Developing New Tools for Educational Researchers

by Tom Hanlon / Oct 28, 2020

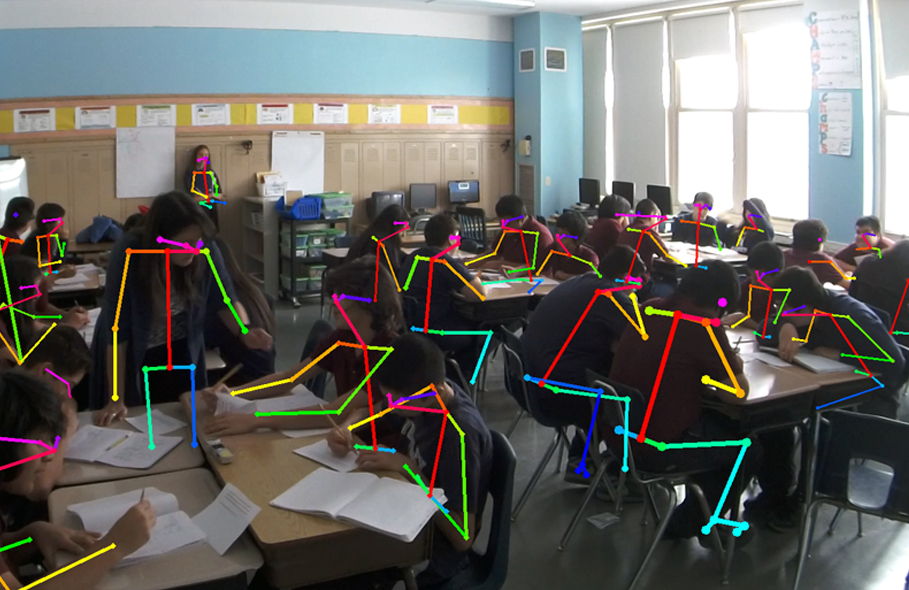

OpenPose, open-source real-time pose detection software (Cao et al., 2017), applied to video from a high school math classroom. The team is using this software to extract pose and location data, which they can then use to develop algorithms that automatically detect potentially meaningful types of interaction, such as how a teacher is moving around the room or interacting with groups of students.

A team at the University of Illinois is working on developing and refining the tools that will aid educational researchers in studying teaching and learning environments—and ultimately in improving those environments.

Just as archeologists need tools to help them excavate sites and analyze artifacts to reveal stories of human history, so educational researchers need tools to help them analyze learning environments to improve learning and teaching.

And three professors from the College of Education are helping to build those educational research tools.

“Our goal is to develop research methodology to support researchers in conducting their own research better,” says Stina Krist, an assistant professor in the Curriculum & Instruction Department. “To that end, we’re thinking about ways of integrating computational methods—specifically, some computer vision techniques and audio analysis techniques—with qualitative methods that have been typically used to analyze data in education, with the goal of using the computational techniques to do things that are hard for human analysts to do.”

Developing Computer Vision and Speech Analytics Tools

Krist, along with assistant professors Cynthia D’Angelo and Nigel Bosch, both in the Educational Psychology Department, has been working on a $1.3M grant from the National Science Foundation. A little more than a third of the way through the three-year grant, they are building on state-of-the-art computer vision and speech analytics methods, which are being tested on video data collected in high school math classrooms.

“We have something like 600 hours of video,” Bosch says. “That’s not an exceptionally huge data set, but it’s intractable for a human to watch closely and make judgments. So, we’re using computer strength to help us fast forward through that video.”

Producing Scalable Data

The research team is attempting to produce, at scale, the type of data qualitative researchers need to answer various questions, D’Angelo says. “We’re looking at issues like teacher movements, class configurations, how the teachers probe for understanding among students, are they asking them low inference questions, what kinds of talk patterns are happening,” she says. “There are things around participation patterns that can tell us a bit about how the class is being run, what kind of conceptual knowledge is being addressed (is it a lot of short answers and the teacher is doing most of that talking) that tell us what kind of teaching and what kind of learning is happening versus other kinds of patterns.”

The team has found that some tools, such as person detection and audio analysis tools, work better than expected, Krist says. “That was great,” she notes. “We don’t have a ton of redevelopment of existing tools, which could have been a possibility.”

That frees them to look for good proof-of-concept cases in the video, she adds. “What’s a question that has impact in education, something that we can use some of these computational tools to analyze and couple it with some qualitative coding to answer the question that we might otherwise not have been able to answer?”

The qualitative analysis team, Krist says, has been doing “low-level” content logging of the video to spur additional ideas on the questions educational researchers need to ask and how the tools the team is developing can aid in answering those questions.

The power in the research, Krist says, is that “we can do this at a scale where we can start to see some correlational patterns that would have just been question marks until we had done a lot of really rigorous human qualitative coding.”

Next Steps and Deliverables

Next steps in their research include settling on the case example they want to pursue and on the specific computational and audio tools they want to refine to analyze it, Krist says.

D’Angelo adds that the team will come up with guidelines for researchers on how to collect the data and make sure it is of high enough quality to be valuable. “One of the things we want to have available at the end is material on ‘Here’s how you do this, if you’re interested in small groups in classrooms, here’s how to collect your data so you can use your tools appropriately,’” she says. “And one of the co-principal investigators is working on an R package; R is a statistical programming language that a lot of researchers use. That will be available to help people use the tools and analyses at the end.”

It’s likely that the benefits for researchers won’t stop when the grant concludes in 2022. “I already have in mind applications for how we can maybe use this in other projects,” Bosch says. “But that’s part of the future work.”